I hate parsing files, but it is something that I have had to do at the start of nearly every project. Parsing is not easy, and it can be a stumbling block for beginners. However, once you become comfortable with parsing files, you never have to worry about that part of the problem. That is why I recommend that beginners get comfortable with parsing files early on in their programming education. This article is aimed at Python beginners who are interested in learning to parse text files.

In this article, I will introduce you to my system for parsing files. I will briefly touch on parsing files in standard formats, but what I want to focus on is the parsing of complex text files. What do I mean by complex? Well, we will get to that, young padawan.

For reference, the slide deck that I use to present on this topic is available here. All of the code and the sample text that I use are available in my GitHub repo here.

Why parse files?

First, let us understand what the problem is. Why do we even need to parse files? In an imaginary world where all data existed in the same format, one could expect all programs to input and output that data. There would be no need to parse files. However, we live in a world where there is a wide variety of data formats. Some data formats are better suited to different applications. An individual program can only be expected to cater for a selection of these data formats. So, inevitably there is a need to convert data from one format to another for consumption by different programs. Sometimes data is not even in a standard format which makes things a little harder.

So, what is parsing?

- Parse: Analyse (a string or text) into logical syntactic components.

I don’t like the above Oxford dictionary definition. So, here is my alternate definition.

- Parse: Convert data in a certain format into a more usable format.

The big picture

With that definition in mind, we can imagine that our input may be in any format. So, the first step, when faced with any parsing problem, is to understand the input data format. If you are lucky, there will be documentation that describes the data format. If not, you may have to decipher the data format for yourself. That is always fun.

Once you understand the input data, the next step is to determine what would be a more usable format. Well, this depends entirely on how you plan on using the data. If the program that you want to feed the data into expects a CSV format, then that’s your end product. For further data analysis, I highly recommend reading the data into a pandas DataFrame.

If you are a Python data analyst, then you are most likely familiar with pandas. It is a Python package that provides the DataFrame class and other functions to do insanely powerful data analysis with minimal effort. It is an abstraction on top of NumPy, which provides multi-dimensional arrays, similar to Matlab. The DataFrame is a 2D array, but it can have multiple row and column indices, which pandas calls a MultiIndex, and that essentially allows it to store multi-dimensional data. SQL or database style operations can be easily performed with pandas (Comparison with SQL). Pandas also comes with a suite of IO tools, which includes functions to deal with CSV, MS Excel, JSON, HDF5 and other data formats.

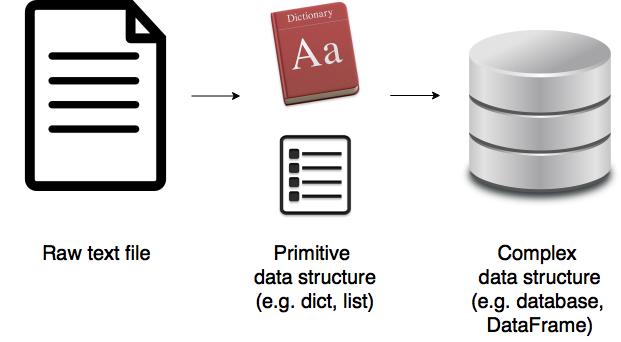

Although we want to end up with a feature-rich data structure like a pandas DataFrame, it would be very inefficient to create an empty DataFrame and write data to it directly. A DataFrame is a complex data structure, and writing to it item by item is computationally expensive. It is a lot faster to read the data into a built-in data type like a list or a dict. Once the list or dict is created, pandas allows us to easily convert it to a DataFrame, as you will see later on. The accompanying image shows the standard process when it comes to parsing any file.
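As a minimal sketch of that idea, the snippet below collects rows in a plain list of dicts and only builds the DataFrame once at the end. The names and scores are made-up stand-ins for values read from a file, and `rows` is just an illustrative variable name.

```python
import pandas as pd

# Hypothetical records, collected one at a time while reading a file.
rows = []
for name, score in [("Phoebe", 3), ("Rachel", 7)]:
    rows.append({"Name": name, "Score": score})  # cheap list append

# Build the DataFrame once, after all the rows have been collected.
df = pd.DataFrame(rows)
print(df)
```

Appending to a list is cheap, so the cost of constructing the DataFrame is paid only once.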

Parsing text in standard format

If your data is in a standard format or close enough, then there is probably an existing package that you can use to read your data with minimal effort.

For example, let’s say we have a CSV file, data.txt:

a,b,c

1,2,3

4,5,6

7,8,9

You can handle this easily with pandas.
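A minimal sketch of how this might look with `pandas.read_csv`. To keep the example runnable without a file on disk, the CSV content is held in a string and wrapped in `io.StringIO`; that wrapper is an assumption for illustration, and with a real file you would pass the filename instead.

```python
import io

import pandas as pd

# Stand-in for the contents of data.txt.
csv_text = "a,b,c\n1,2,3\n4,5,6\n7,8,9\n"

# With a real file on disk this would be: pd.read_csv("data.txt")
df = pd.read_csv(io.StringIO(csv_text))
print(df)
```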


| | a | b | c |
|---|---|---|---|
| 0 | 1 | 2 | 3 |
| 1 | 4 | 5 | 6 |
| 2 | 7 | 8 | 9 |

Parsing text using string methods

Python is incredible when it comes to dealing with strings. It is worth internalising all the common string operations. We can use these methods to extract data from a string as you can see in the simple example below.
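The following is a small sketch of such an extraction using only `split` and `strip`. The input string `my_string` is an assumed example, chosen so that the intermediate steps can be printed one at a time.

```python
my_string = "Names: Romeo, Juliet"

# Split the label from the values at the colon.
step_0 = my_string.split(":")
# Keep only the part after the colon.
step_1 = step_0[1]
# Split the individual names at the comma.
step_2 = step_1.split(",")
# Remove the leading and trailing whitespace from each name.
step_3 = [name.strip() for name in step_2]

# The same thing as a single expression.
one_go = [name.strip() for name in my_string.split(":")[1].split(",")]

print("Step 0:", step_0)
print("Step 1:", step_1)
print("Step 2:", step_2)
print("Step 3:", step_3)
print("Final result in one go:", one_go)
```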


Step 0: ['Names', ' Romeo, Juliet']

Step 1: Romeo, Juliet

Step 2: [' Romeo', ' Juliet']

Step 3: ['Romeo', 'Juliet']

Final result in one go: ['Romeo', 'Juliet']

Parsing text in complex format using regular expressions

As you saw in the previous two sections, if the parsing problem is simple we might get away with just using an existing parser or some string methods. However, life ain’t always that easy. How do we go about parsing a complex text file?

Step 1: Understand the input format


Sample text

A selection of students from Riverdale High and Hogwarts took part in a quiz.

Below is a record of their scores.

School = Riverdale High

Grade = 1

Student number, Name

0, Phoebe

1, Rachel

Student number, Score

0, 3

1, 7

Grade = 2

Student number, Name

0, Angela

1, Tristan

2, Aurora

Student number, Score

0, 6

1, 3

2, 9

School = Hogwarts

Grade = 1

Student number, Name

0, Ginny

1, Luna

Student number, Score

0, 8

1, 7

Grade = 2

Student number, Name

0, Harry

1, Hermione

Student number, Score

0, 5

1, 10

Grade = 3

Student number, Name

0, Fred

1, George

Student number, Score

0, 0

1, 0

That’s a pretty complex input file! Phew! The data it contains is pretty simple though as you can see below:

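| School | Grade | Student number | Name | Score |
|---|---|---|---|---|
| Hogwarts | 1 | 0 | Ginny | 8 |
| Hogwarts | 1 | 1 | Luna | 7 |
| Hogwarts | 2 | 0 | Harry | 5 |
| Hogwarts | 2 | 1 | Hermione | 10 |
| Hogwarts | 3 | 0 | Fred | 0 |
| Hogwarts | 3 | 1 | George | 0 |
| Riverdale High | 1 | 0 | Phoebe | 3 |
| Riverdale High | 1 | 1 | Rachel | 7 |
| Riverdale High | 2 | 0 | Angela | 6 |
| Riverdale High | 2 | 1 | Tristan | 3 |
| Riverdale High | 2 | 2 | Aurora | 9 |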

The sample text looks similar to a CSV in that it uses commas to separate out some information. There is a title and some metadata at the top of the file. There are five variables: School, Grade, Student number, Name and Score. School, Grade and Student number are keys. Name and Score are fields. For a given School, Grade, Student number there is a Name and a Score. In other words, School, Grade, and Student Number together form a compound key.

The data is given in a hierarchical format. First, a School is declared, then a Grade. This is followed by two tables providing Name and Score for each Student number. Then Grade is incremented. This is followed by another set of tables. Then the pattern repeats for another School. Note that the number of students in a Grade and the number of grades in a School are not constant, which adds a bit of complexity to the file. This is just a small dataset. You can easily imagine this being a massive file with lots of schools, grades and students.

It goes without saying that the data format is exceptionally poor. I have done this on purpose. If you understand how to handle this, then it will be a lot easier for you to master simpler formats. It’s not unusual to come across files like this if you have to deal with a lot of legacy systems. In the past, when those systems were being designed, it may not have been a requirement for the data output to be machine-readable. However, nowadays everything needs to be machine-readable!

Step 2: Import the required packages

We will need the Regular expressions module and the pandas package. So, let’s go ahead and import those.
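A sketch of the two imports. `re` ships with the standard library, while pandas is a third-party package that has to be installed separately; the alias `pd` is the usual convention.

```python
import re  # regular expressions, part of the standard library

import pandas as pd  # third-party package, conventionally imported as pd
```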


Step 3: Define regular expressions

In the last step, we imported re, the regular expressions module. What is it though?

Well, earlier on we saw how to use the string methods to extract data from text. However, when parsing complex files, we can end up with a lot of stripping, splitting, slicing and whatnot, and the code can end up looking pretty unreadable. That is where regular expressions come in. They are essentially a tiny language embedded inside Python that allows you to say what string pattern you are looking for. They are not unique to Python, by the way (treehouse).

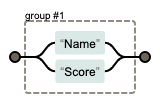

You do not need to become a master at regular expressions. However, some basic knowledge of regexes can be very handy in your programming career. I will only teach you the very basics in this article, but I encourage you to do some further study. I also recommend regexper for visualising regular expressions. regex101 is another excellent resource for testing your regular expression.

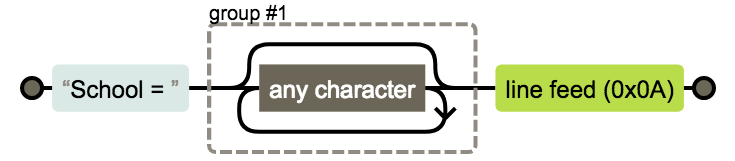

We are going to need three regexes. The first one, as shown below, will help us to identify the school. Its regular expression is School = (.*)\n. What do the symbols mean?

- `.`: Any character
- `*`: 0 or more of the preceding expression
- `(.*)`: Placing part of a regular expression inside parentheses allows you to group that part of the expression. So, in this case, the grouped part is the name of the school.
- `\n`: The newline character at the end of the line

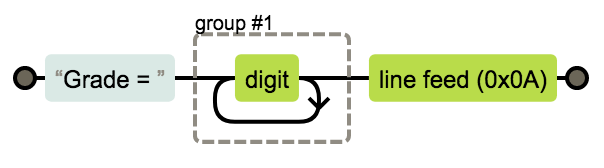

We then need a regular expression for the grade. Its regular expression is Grade = (\d+)\n. This is very similar to the previous expression. The new symbols are:

- `\d`: Short for `[0-9]`
- `+`: 1 or more of the preceding expression

Finally, we need a regular expression to identify whether the table that follows the expression in the text file is a table of names or scores. Its regular expression is (Name|Score). The new symbol is:

|: Logical or statement, so in this case, it means ‘Name’ or ‘Score.’
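To see the three patterns in action, here is a small sketch that tries each one against a line taken from the sample text. The variable names are illustrative, and `re.search` is used here only as a quick way to test a pattern.

```python
import re

# Each pattern is tried on a line from the sample text (with its newline).
school = re.search(r"School = (.*)\n", "School = Riverdale High\n")
grade = re.search(r"Grade = (\d+)\n", "Grade = 1\n")
table = re.search(r"(Name|Score)", "Student number, Score\n")

print(school.group(1))  # Riverdale High
print(grade.group(1))   # 1
print(table.group(1))   # Score
```

Note that `.` does not match the newline character by default, so the captured school name stops at the end of the line.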

We also need to understand a few regular expression functions:

- `re.compile(pattern)`: Compile a regular expression pattern into a RegexObject.

A RegexObject has the following methods:

- `match(string)`: If the beginning of string matches the regular expression, return a corresponding MatchObject instance. Otherwise, return None.
- `search(string)`: Scan through string looking for a location where this regular expression produces a match, and return a corresponding MatchObject instance. Return None if there are no matches.

A MatchObject always has a boolean value of True. Thus, we can just use an if statement to identify positive matches. It has the following method:

- `group()`: Returns one or more subgroups of the match. Groups can be referred to by their index. `group(0)` returns the entire match. `group(1)` returns the first parenthesized subgroup and so on.

The regular expressions we used only have a single group. Easy! However, what if there were multiple groups? It would get hard to remember which number a group belongs to. A Python-specific extension allows us to name the groups and refer to them by their name instead. We can specify a name within a parenthesized group `(...)` like so: `(?P<name>...)`.
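The difference between `match` and `search`, and the use of a named group, can be sketched as follows. The pattern and the group name `grade` are chosen for illustration.

```python
import re

rx = re.compile(r"Grade = (?P<grade>\d+)")

# match() only succeeds if the pattern matches at the start of the string.
print(rx.match("Grade = 2") is not None)  # True
print(rx.match("  Grade = 2"))            # None, because of the leading spaces

# search() scans the whole string for the first match.
m = rx.search("  Grade = 2")
if m:  # a match object is always truthy
    print(m.group(0))        # the entire match: Grade = 2
    print(m.group(1))        # first group by index: 2
    print(m.group("grade"))  # the same group by name: 2
```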

Let us first define all the regular expressions. Be sure to use raw strings for regexes, i.e., put the prefix r before each pattern.
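One way to keep the compiled patterns together is a dictionary keyed by a short label. This is a sketch under the assumption that named groups are used for all three patterns; the dictionary name `rx_dict` and its keys are illustrative.

```python
import re

# Compiled patterns, keyed by the kind of line they identify.
rx_dict = {
    "school": re.compile(r"School = (?P<school>.*)\n"),
    "grade": re.compile(r"Grade = (?P<grade>\d+)\n"),
    "name_score": re.compile(r"(?P<name_score>Name|Score)"),
}
```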


Step 4: Write a line parser

Then, we can define a function that checks for regex matches.
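A minimal sketch of such a function is given below. It tries each pattern in turn and returns the label of the first one that matches, together with the match object. The function name `parse_line` and the dictionary `rx_dict` are assumptions made for this example.

```python
import re

# Compiled patterns, keyed by the kind of line they identify.
rx_dict = {
    "school": re.compile(r"School = (?P<school>.*)\n"),
    "grade": re.compile(r"Grade = (?P<grade>\d+)\n"),
    "name_score": re.compile(r"(?P<name_score>Name|Score)"),
}


def parse_line(line):
    """Return (key, match) for the first pattern found in line.

    If no pattern matches, return (None, None).
    """
    for key, rx in rx_dict.items():
        match = rx.search(line)
        if match:
            return key, match
    return None, None


key, match = parse_line("Grade = 3\n")
print(key, match.group("grade"))  # grade 3
```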


Step 5: Write a file parser

Finally, for the main event, we have the file parser function. It is quite big, but the comments in the code should hopefully help you understand the logic.
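What follows is one possible sketch of a file parser, not necessarily the exact code this article was written around. It keeps track of the current school, grade and table type while walking through the lines, stores every table row in a dict keyed by the compound key, and converts the result to a DataFrame at the end. The names `parse_lines`, `rx_row` and `sample` are hypothetical, the row pattern is an assumption about what a table row looks like, and a shortened sample is used in place of the full file.

```python
import io
import re

import pandas as pd

rx_dict = {
    "school": re.compile(r"School = (?P<school>.*)\n"),
    "grade": re.compile(r"Grade = (?P<grade>\d+)\n"),
    "name_score": re.compile(r"(?P<name_score>Name|Score)"),
}
# Assumed pattern for a table row such as "0, Phoebe" (not from the article).
rx_row = re.compile(r"^(?P<number>\d+),\s*(?P<value>.+)$")


def parse_line(line):
    """Return (key, match) for the first pattern found, else (None, None)."""
    for key, rx in rx_dict.items():
        match = rx.search(line)
        if match:
            return key, match
    return None, None


def parse_lines(lines):
    """Parse an iterable of newline-terminated lines into a DataFrame."""
    records = {}  # (school, grade, student number) -> {"Name": .., "Score": ..}
    school = grade = field = None
    for line in lines:
        # Table rows belong to the most recently seen table header.
        row = rx_row.match(line.strip())
        if row and field is not None:
            value = row.group("value")
            if field == "Score":
                value = int(value)
            key = (school, grade, int(row.group("number")))
            records.setdefault(key, {})[field] = value
            continue
        # Otherwise, check whether the line changes the current state.
        key, match = parse_line(line)
        if key == "school":
            school = match.group("school")
        elif key == "grade":
            grade = int(match.group("grade"))
        elif key == "name_score":
            field = match.group("name_score")
    # Build the DataFrame once, from plain Python data.
    rows = [
        {"School": s, "Grade": g, "Student number": n, **fields}
        for (s, g, n), fields in records.items()
    ]
    df = pd.DataFrame(rows)
    return df.set_index(["School", "Grade", "Student number"]).sort_index()


# Shortened sample; a real file could be passed as an open file object.
sample = """\
School = Riverdale High
Grade = 1
Student number, Name
0, Phoebe
1, Rachel
Student number, Score
0, 3
1, 7
School = Hogwarts
Grade = 2
Student number, Name
0, Harry
1, Hermione
Student number, Score
0, 5
1, 10
"""
df = parse_lines(io.StringIO(sample))
print(df)
```

Because the function accepts any iterable of lines, the same code works with `open(filepath)` and with an in-memory string, which also makes it easy to test.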


Step 6: Test the parser

We can now run our parser on the sample text. Printing the resulting DataFrame gives the following:

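| School | Grade | Student number | Name | Score |
|---|---|---|---|---|
| Hogwarts | 1 | 0 | Ginny | 8 |
| Hogwarts | 1 | 1 | Luna | 7 |
| Hogwarts | 2 | 0 | Harry | 5 |
| Hogwarts | 2 | 1 | Hermione | 10 |
| Hogwarts | 3 | 0 | Fred | 0 |
| Hogwarts | 3 | 1 | George | 0 |
| Riverdale High | 1 | 0 | Phoebe | 3 |
| Riverdale High | 1 | 1 | Rachel | 7 |
| Riverdale High | 2 | 0 | Angela | 6 |
| Riverdale High | 2 | 1 | Tristan | 3 |
| Riverdale High | 2 | 2 | Aurora | 9 |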

This is all well and good, and you can see by comparing the input and output by eye that the parser is working correctly. However, the best practice is to always write unit tests to make sure your code is doing what you intended it to do. Whenever you write a parser, please ensure that it’s well tested. I have gotten into trouble with my colleagues before for using parsers without testing them. Eeek! It’s also worth noting that this does not necessarily need to be the last step. Indeed, lots of programmers preach about Test Driven Development. I have not included a test suite here as I wanted to keep this tutorial concise.

Is this the best solution?

I have been parsing text files for a year and perfected my method over time. Even so, I did some additional research to find out if there was a better solution. Indeed, I owe thanks to various community members who advised me on optimising my code. The community also offered some different ways of parsing the text file. Some of them were clever and exciting. My personal favourite was this one. I presented my sample problem and solution at the forums below:

If your problem is even more complex and regular expressions don’t cut it, then the next step would be to consider parsing libraries. Here are a couple of places to start with:

- Parsing Horrible Things with Python: A PyCon lecture by Erik Rose looking at the pros and cons of various parsing libraries.

- Parsing in Python: Tools and Libraries: Tools and libraries that allow you to create parsers when regular expressions are not enough.

Conclusion

Now that you understand how difficult and annoying it can be to parse text files, if you ever find yourselves in the privileged position of choosing a file format, choose it with care. Here are Stanford’s best practices for file formats.

I’d be lying if I said I was delighted with my parsing method, but I’m not aware of another way of quickly parsing a text file that is as beginner-friendly as what I’ve presented above. If you know of a better solution, I’m all ears! I have hopefully given you a good starting point for parsing a file in Python! I spent a couple of months trying lots of different methods and writing some insanely unreadable code before I finally figured it out, and now I don’t think twice about parsing a file. So, I hope I have been able to save you some time. Have fun parsing text with Python!